On March 12, 2026, Microsoft Research unveiled AgentRx, an open-source framework that automatically diagnoses why AI agents fail during complex tasks. The tool pinpoints the exact step where an agent’s process becomes unrecoverable. In tests on 115 failed agent trajectories, it localized that step with a 23.6% absolute improvement over existing LLM-based prompting baselines.

The framework treats AI agent execution as a system trace that can be validated, providing developers with an evidence-backed audit trail for debugging complex failures, according to Microsoft Research.

AgentRx operates through a three-stage diagnostic pipeline. First, it generates executable constraints that define correct agent behavior by synthesizing rules from tool schemas like OpenAPI specifications and domain policies expressed in natural language. The framework then systematically replays the agent’s complete trajectory, evaluating each action against these constraints. When violations occur, it identifies the first unrecoverable step as the “critical failure,” allowing developers to focus on the precise origin rather than downstream effects.
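The replay-and-check idea can be illustrated with a minimal Python sketch. This is not AgentRx's actual API: the step format, the `Constraint` and `Violation` classes, the two example rules, and the `fatal` flag used to mark a violation as unrecoverable are all hypothetical stand-ins, since the article does not describe how the framework represents constraints or judges recoverability.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical step format: {"tool": str, "args": dict}
Step = dict


@dataclass
class Constraint:
    name: str
    # Returns True when the step is valid given the steps that came before it.
    check: Callable[[Step, list], bool]
    # Assumption: a flag marking violations the agent cannot recover from.
    fatal: bool = True


@dataclass
class Violation:
    step_index: int
    constraint: str
    fatal: bool


def replay(trajectory: list, constraints: list) -> list:
    """Evaluate every step against every constraint, in execution order."""
    violations = []
    for i, step in enumerate(trajectory):
        history = trajectory[:i]
        for c in constraints:
            if not c.check(step, history):
                violations.append(Violation(i, c.name, c.fatal))
    return violations


def critical_failure(violations: list) -> Optional[Violation]:
    """Return the earliest violation flagged as unrecoverable, if any."""
    for v in violations:  # already ordered by step index
        if v.fatal:
            return v
    return None


# Example rule in the spirit of a tool schema: required arguments per tool.
def has_required_args(step: Step, history: list) -> bool:
    required = {"lookup_order": {"order_id"}, "issue_refund": {"order_id", "amount"}}
    return required.get(step["tool"], set()).issubset(step["args"])


# Example rule in the spirit of a domain policy: look up an order before refunding.
def refund_after_lookup(step: Step, history: list) -> bool:
    if step["tool"] != "issue_refund":
        return True
    return any(s["tool"] == "lookup_order" for s in history)


trajectory = [
    {"tool": "greet", "args": {}},
    {"tool": "issue_refund", "args": {"order_id": "A1"}},  # no amount, no prior lookup
    {"tool": "lookup_order", "args": {"order_id": "A1"}},
]
constraints = [
    Constraint("schema: required args", has_required_args, fatal=False),
    Constraint("policy: lookup before refund", refund_after_lookup, fatal=True),
]

violations = replay(trajectory, constraints)
first = critical_failure(violations)
print(first)
```

In this toy trajectory the second step violates both rules, but only the policy violation is marked unrecoverable, so it is the one reported as the critical failure. The later `lookup_order` call is a downstream effect and is not flagged.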

Performance Validation

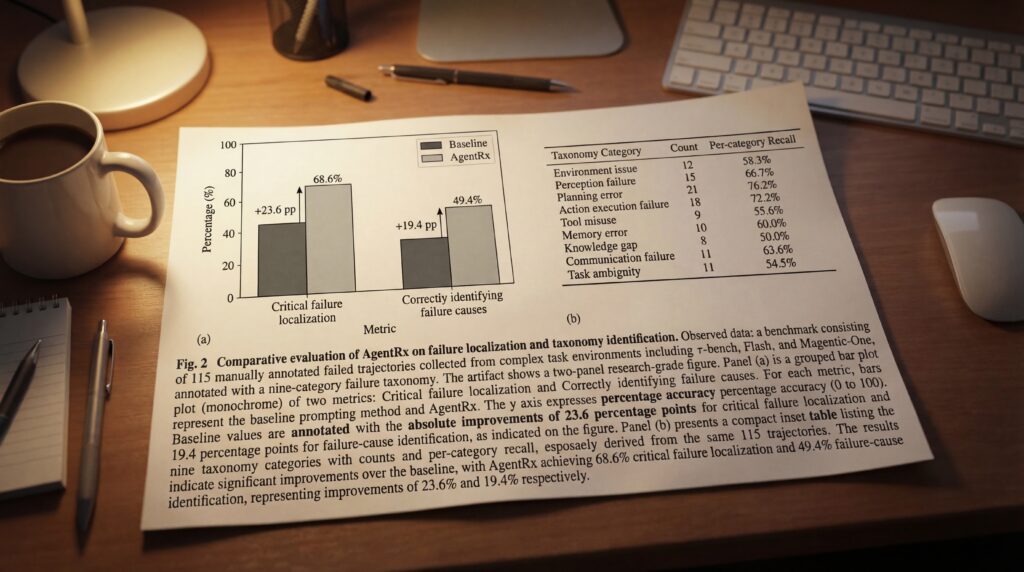

Microsoft Research developed the AgentRx Benchmark to validate the framework’s effectiveness, creating a dataset of 115 manually annotated failed trajectories from complex task environments including τ-bench, Flash, and Magentic-One. The annotation process yielded a nine-category failure taxonomy that includes issues such as plan adherence failures and invention of information not present in observations.

Testing demonstrated significant improvements over existing LLM-based prompting baselines. AgentRx achieved a 23.6% absolute improvement in critical failure localization and a 19.4% absolute improvement in correctly identifying failure causes according to the taxonomy, Microsoft Research reported.
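An absolute improvement is a difference in percentage points, not a ratio. The sketch below shows one way such a figure could be computed, assuming localization accuracy means the fraction of trajectories where the predicted critical step matches the annotated one. That definition, the function names, and the toy step indices are illustrative assumptions, not the benchmark's published scoring code or data.

```python
def localization_accuracy(predicted: list, annotated: list) -> float:
    """Fraction of trajectories whose predicted critical step matches the annotation."""
    if not annotated or len(predicted) != len(annotated):
        raise ValueError("predicted and annotated must be non-empty and equal length")
    hits = sum(p == a for p, a in zip(predicted, annotated))
    return hits / len(annotated)


def absolute_improvement(new_acc: float, baseline_acc: float) -> float:
    """Difference between two accuracies, expressed in percentage points."""
    return (new_acc - baseline_acc) * 100


# Toy data (hypothetical): annotated critical-step index for four trajectories.
annotated = [3, 1, 4, 2]
baseline_pred = [3, 0, 0, 0]  # one of four correct
new_pred = [3, 1, 0, 2]       # three of four correct

baseline_acc = localization_accuracy(baseline_pred, annotated)
new_acc = localization_accuracy(new_pred, annotated)
gain = absolute_improvement(new_acc, baseline_acc)
print(f"{gain:.1f} percentage points")
```

Under this reading, a 23.6% absolute improvement means the accuracy figure itself rose by 23.6 points, whatever the baseline value was.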

Market Impact

By releasing both the framework and the annotated benchmark as open source, Microsoft aims to make AI agent debugging more systematic and evidence-driven. The tool targets a bottleneck in AI development as enterprises increasingly deploy autonomous agents for complex tasks.

By providing precise, auditable diagnostics, AgentRx is intended to help developers build more transparent and reliable AI systems. Microsoft Research invited the community to use the tools in their own agent workflows and to contribute to the growing knowledge base of failure constraints.

While the framework shows promising results on the tested architectures, its performance on agent systems and failure modes not represented in the benchmark remains unexplored, leaving room for future work to extend its diagnostic coverage.

Sources

- microsoft.com/en-us/research/blog