Researchers published a study Monday in Nature Machine Intelligence challenging how artificial intelligence speech recognition systems are tested, arguing that current methods fail to account for the multiple valid ways human speech can be transcribed. The team, led by Mona Sloane, proposes replacing traditional single-answer accuracy tests with a new framework that incorporates input from diverse stakeholders, including users, developers, and affected communities, to better evaluate whether these AI systems work fairly and effectively across different contexts.

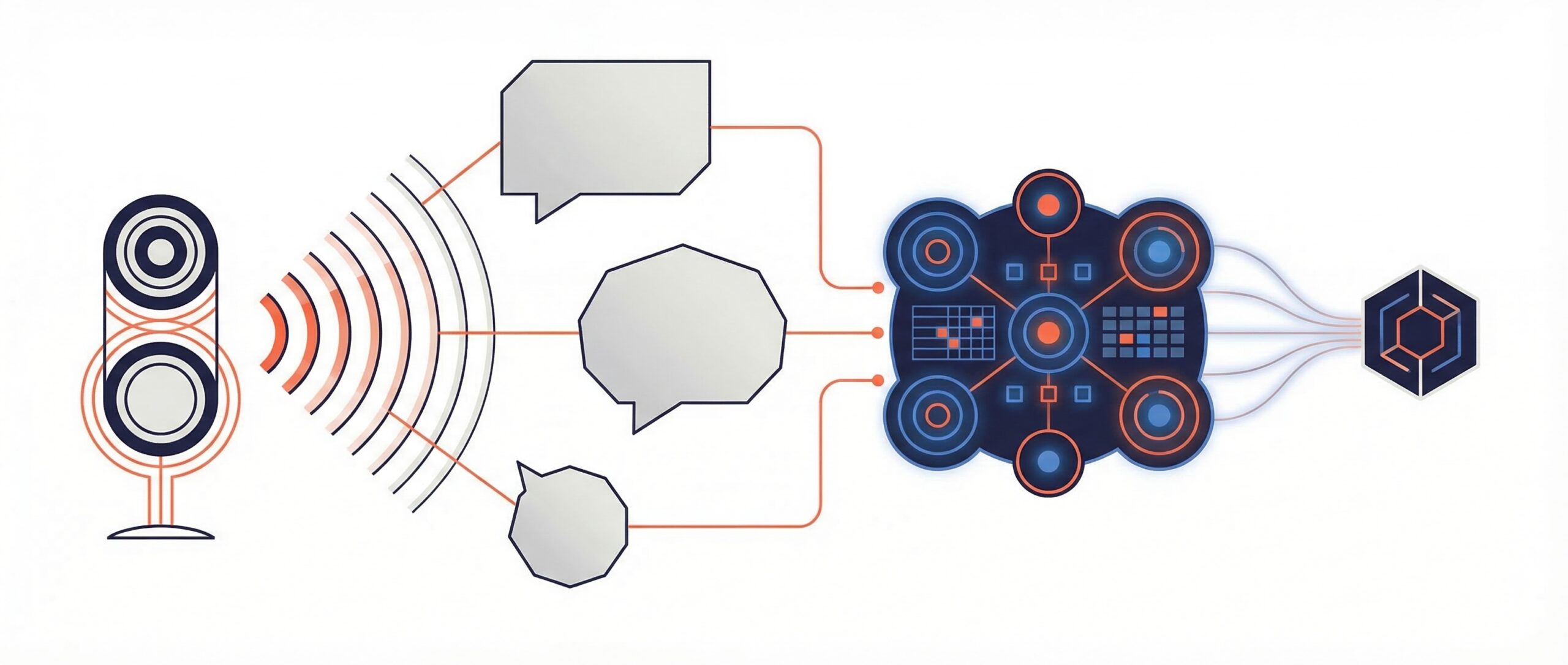

The problem lies in how accuracy is measured. Traditional automatic speech recognition (ASR) testing relies on metrics like Word Error Rate (WER) and Character Error Rate (CER), which compare system outputs against a single reference transcription. But according to the researchers, this approach ignores a fundamental reality: there’s often no single correct way to transcribe human speech.

Consider a medical consultation where a patient stutters or uses filler words like “um” and “uh.” For clinical analysis, preserving these speech patterns in the transcription could be crucial for diagnosis. Yet for generating meeting summaries or closed captions, a cleaned-up version without these elements would be more appropriate. Both transcriptions are valid, the study argues, which undermines the traditional notion of one objective “ground truth.”
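The effect is easy to reproduce with a small sketch. The Python below is not taken from the study; it implements the standard WER definition (word-level edit distance divided by the number of reference words) and scores one system output against two equally defensible references. The function name `word_error_rate` and the example sentences are illustrative assumptions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Standard WER: word-level edit distance / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from output
            insertion = curr[j - 1] + 1  # extra word in output
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical transcripts of the same utterance (invented for illustration).
verbatim_ref = "um i have had uh chest pain since monday"  # keeps filler words
clean_ref = "i have had chest pain since monday"           # fillers removed
system_output = "i have had chest pain since monday"

print(f"WER vs verbatim reference: {word_error_rate(verbatim_ref, system_output):.2f}")  # 0.22
print(f"WER vs cleaned reference:  {word_error_rate(clean_ref, system_output):.2f}")     # 0.00
```

The same output scores as flawless against the cleaned reference and as roughly 22 percent wrong against the verbatim one, so the reported number depends on which reference the tester picked.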

A New Stakeholder-Driven Approach

The team proposes shifting from single-reference accuracy tests to a stakeholder-driven auditing framework. This model would bring together end users, developers, domain experts, and affected communities to collectively determine what constitutes fair and useful speech recognition output for specific contexts.

Rather than simply measuring deviation from a predetermined correct answer, this approach would facilitate structured dialogue about an AI system’s real-world performance and impact. The framework prioritizes contextual appropriateness over raw accuracy scores, potentially leading to more equitable speech recognition technologies that better serve diverse user groups.

Industry Implications

The proposed changes could significantly affect how tech companies develop and market speech recognition systems. The industry currently relies heavily on traditional accuracy metrics for product comparisons and regulatory compliance. A shift to stakeholder-driven evaluation would require new benchmarks and could raise development costs.

However, critical details about implementation remain unclear. The Nature Machine Intelligence article, published March 16, 2026, has not yet been made fully accessible, leaving questions about practical adoption challenges, scalability concerns, and specific policy recommendations unanswered. The research team includes co-authors H. Schellmann and K.X. Mei, though their full framework specifications await broader release.

As AI speech recognition becomes increasingly integrated into healthcare, education, and legal systems, this rethinking of evaluation methods could reshape how these critical technologies are developed, tested, and deployed across industries.
