Researchers have unveiled a new framework that dramatically improves how robots plan and execute complex physical tasks, according to findings released today. The Video-to-Spatially Grounded Planning (V2GP) system outperformed existing methods by combining high-level planning with spatial awareness in a single model, rather than handling these functions separately. Tests using the newly created GroundedPlanBench benchmark showed the integrated approach significantly boosted robots’ ability to complete multi-step manipulation tasks in real-world environments.

The breakthrough addresses a fundamental challenge in robotics: enabling machines to understand both what to do and precisely where to act in cluttered, real-world environments. According to the Microsoft Research Blog, traditional approaches suffer from a critical flaw when translating high-level instructions into physical actions.

Current robotic systems typically use a two-step process that first generates text-based plans like “put a spoon on the white plate,” then attempts to locate the specific objects. This decoupled approach creates ambiguity problems. When multiple similar objects exist in a scene, vague language instructions often cause robots to repeatedly select the wrong item, leading to task failure.

The new grounded planning approach eliminates this intermediate language step entirely. Instead of producing text instructions, the system directly outputs actions paired with precise spatial coordinates, making it significantly more reliable in complex environments, researchers reported on the project website.
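The difference between the two styles can be illustrated with a small sketch. The article does not specify V2GP's actual output format, so everything here is an assumption made for illustration: the `SceneObject` and `GroundedAction` types, the pixel-coordinate convention, and the helper functions are all hypothetical. The sketch only shows why a label such as "spoon" can match several objects while a coordinate picks out exactly one.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumed convention: (x, y) in image pixels. Not taken from the V2GP project.
Point = Tuple[float, float]


@dataclass(frozen=True)
class SceneObject:
    label: str
    center: Point


@dataclass(frozen=True)
class GroundedAction:
    """Hypothetical grounded plan step: an action verb plus where to act."""
    verb: str
    point: Point


def candidates_for_label(scene: List[SceneObject], label: str) -> List[SceneObject]:
    """Decoupled style: the text plan names a label and a later step must find it."""
    return [obj for obj in scene if obj.label == label]


def nearest_object(scene: List[SceneObject], point: Point) -> SceneObject:
    """Grounded style: a coordinate resolves to the single closest object."""
    return min(
        scene,
        key=lambda obj: (obj.center[0] - point[0]) ** 2 + (obj.center[1] - point[1]) ** 2,
    )


# Illustrative scene with two spoons and one plate (made-up coordinates).
scene = [
    SceneObject("spoon", (120.0, 340.0)),
    SceneObject("spoon", (410.0, 95.0)),
    SceneObject("white plate", (300.0, 220.0)),
]

# A text instruction mentioning "spoon" leaves two candidates to choose from.
ambiguous = candidates_for_label(scene, "spoon")

# A grounded plan carries the location with each step, so no lookup by name is needed.
plan = [
    GroundedAction("pick", (412.0, 97.0)),
    GroundedAction("place", (300.0, 220.0)),
]
targets = [nearest_object(scene, step.point) for step in plan]

print(len(ambiguous), "objects match the label 'spoon'")
for step, target in zip(plan, targets):
    print(step.verb, "->", target.label, target.center)
```

In this toy scene the label lookup returns both spoons, while each grounded step resolves to a single object.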

Performance Breakthrough

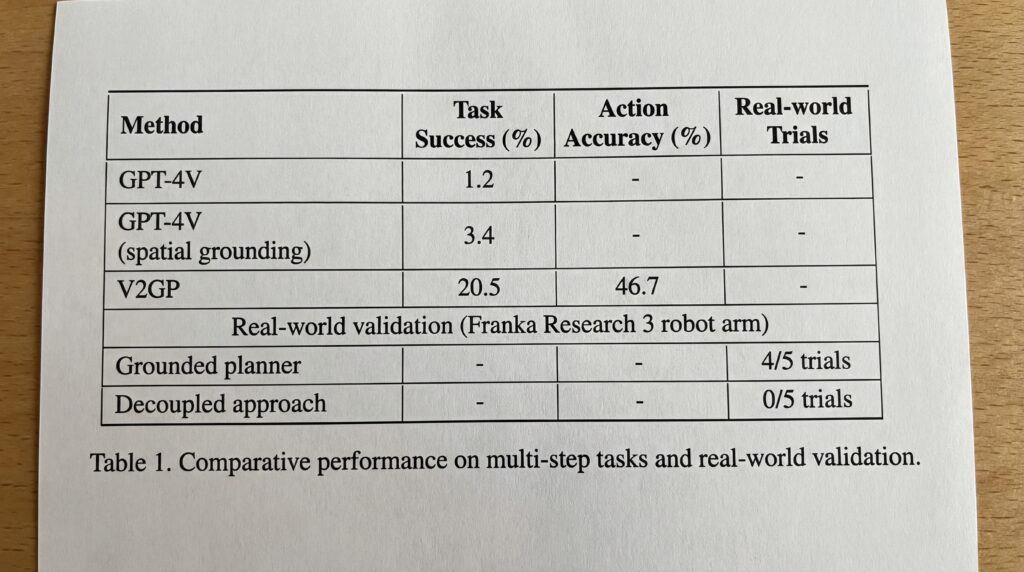

Testing revealed dramatic performance gaps between the approaches. On GroundedPlanBench, state-of-the-art models like GPT-4V achieved only a 1.2% success rate on tasks requiring five to eight actions, according to the research findings. Even when these models were combined with specialized spatial grounding models, the success rate rose only to 3.4%.

In contrast, models trained with the V2GP framework achieved 20.5% task success and 46.7% action accuracy on the same complex tasks. That task success rate is roughly six times the 3.4% reached by the best decoupled baseline.
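The size of the improvement depends on which baseline is used for comparison. A quick check, using only the success rates quoted in this article (the variable names are illustrative):

```python
# Task success rates on five-to-eight-action tasks, as quoted in the article (percent).
planner_only = 1.2          # state-of-the-art model on its own
planner_plus_grounder = 3.4 # same model combined with a spatial grounding model
v2gp = 20.5                 # model trained with the V2GP framework

ratio_vs_combined = v2gp / planner_plus_grounder
ratio_vs_planner_only = v2gp / planner_only

print(f"vs. planner + grounder: {ratio_vs_combined:.1f}x")
print(f"vs. planner only:       {ratio_vs_planner_only:.1f}x")
```

The ratio against the combined baseline comes out at about 6, while the ratio against the planner used alone is about 17.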

Real-world validation using a Franka Research 3 robot arm showed an even starker difference. The grounded planner completed the test tasks in four out of five trials, while the traditional decoupled approach failed on every attempt, primarily due to spatial grounding errors.

Industry Impact

The development could accelerate deployment of robots in warehouses, manufacturing, and service industries where machines must navigate unpredictable environments. However, the researchers acknowledge current limitations, noting that the benchmark dataset and trained models are not yet publicly available.

Future development will focus on integrating world models that allow robots to predict action consequences before execution, potentially creating more deliberative systems capable of reasoning about cause and effect in physical spaces, according to the Microsoft Research Blog.

Sources

- microsoft.com/en-us/research/blog

- groundedplanning.github.io