The breakthrough addresses a critical challenge that has plagued artificial intelligence adoption in healthcare and other sensitive sectors: the inability of AI systems to clearly articulate their reasoning. Published at the International Conference on Learning Representations (ICLR) 2026, the research demonstrates how the new system can identify and name the specific visual features it uses when diagnosing skin lesions or classifying bird species, according to MIT News.

Led by Antonio De Santis of the Polytechnic University of Milan and MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), the research team developed a four-stage pipeline that fundamentally changes how AI explanations work. Rather than forcing models to use human-defined concepts that may not align with their actual decision-making process, the system extracts concepts the AI has already learned are relevant.

Technical Breakthrough

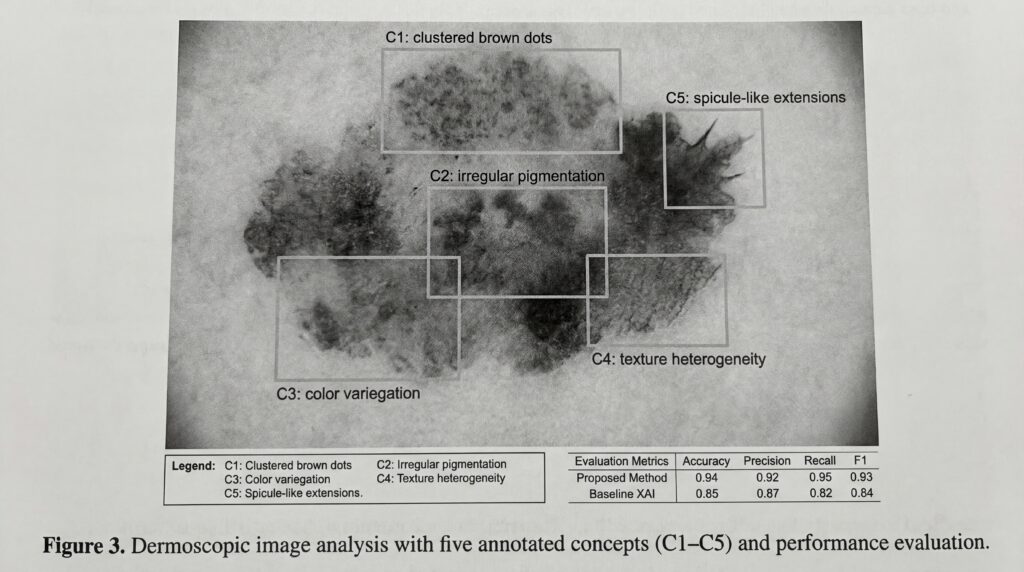

The M-CBM uses a sparse autoencoder to analyze pre-trained models and identify their most critical learned features. A multimodal language model then automatically generates natural language descriptions for each discovered concept, creating a direct bridge between complex internal representations and human understanding. The system restricts itself to just five concepts per prediction, keeping explanations digestible while maintaining accuracy.
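To make the five-concept limit concrete, here is a minimal Python sketch of how a prediction restricted to a handful of named concepts might be scored. It is an illustration under stated assumptions, not the researchers' implementation: the function `top_k_explanation`, the linear scoring rule, the class labels, the weights, and every concept name other than "clustered brown dots" are hypothetical.

```python
from typing import Dict, List, Tuple

Contribution = Tuple[str, float]


def top_k_explanation(
    activations: Dict[str, float],
    weights: Dict[str, Dict[str, float]],
    k: int = 5,
) -> Tuple[str, List[Contribution]]:
    """Score each class using only its k strongest concept contributions.

    Assumption: a class score is a weighted sum of concept activations,
    truncated to the k terms with the largest absolute value.
    """
    best_label, best_score, best_used = "", float("-inf"), []
    for label, class_weights in weights.items():
        contributions = [
            (name, activations.get(name, 0.0) * weight)
            for name, weight in class_weights.items()
        ]
        # Keep only the k concepts that move this class score the most.
        contributions.sort(key=lambda item: abs(item[1]), reverse=True)
        used = contributions[:k]
        score = sum(value for _, value in used)
        if score > best_score:
            best_label, best_score, best_used = label, score, used
    return best_label, best_used


# Hypothetical concept activations for one skin-lesion image.
activations = {
    "clustered brown dots": 0.9,
    "irregular border": 0.7,
    "uniform color": 0.1,
    "blue-white veil": 0.4,
    "symmetric shape": 0.2,
    "hair occlusion": 0.5,
}

# Hypothetical per-class concept weights.
weights = {
    "melanoma": {
        "clustered brown dots": 1.2,
        "irregular border": 1.0,
        "uniform color": -0.8,
        "blue-white veil": 0.9,
        "symmetric shape": -0.6,
        "hair occlusion": 0.05,
    },
    "benign nevus": {
        "clustered brown dots": -0.3,
        "irregular border": -0.9,
        "uniform color": 1.1,
        "blue-white veil": -0.5,
        "symmetric shape": 1.0,
        "hair occlusion": 0.02,
    },
}

label, used = top_k_explanation(activations, weights)
print(label)
for name, value in used:
    print(f"  {name}: {value:+.2f}")
```

Because the explanation lists only the concepts that actually entered the score, a reader can check each named feature against the image. In this toy setup the weakly weighted "hair occlusion" concept falls outside the top five and plays no part in the prediction.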

In tests on medical image analysis and species identification tasks, the M-CBM achieved higher accuracy than existing explainable AI methods while producing more precise explanations, according to the research paper. When analyzing skin lesions, for instance, the system can report that it detected features such as “clustered brown dots,” allowing doctors to judge whether to trust its diagnosis.

Market Impact and Limitations

Despite the advancement, significant challenges remain. “Black-box models that are not interpretable still outperform ours,” De Santis acknowledged, highlighting the persistent trade-off between accuracy and interpretability. The team also warned of potential information leakage, where models might “secretly use concepts we are unaware of,” potentially undermining explanation reliability.

The implications for healthcare AI deployment are substantial. As regulatory pressure mounts for transparent AI systems in medical settings, tools like M-CBM could accelerate adoption by providing the accountability clinicians and regulators demand. The researchers plan to scale the method using more powerful language models to further close the performance gap with non-interpretable systems.