Databricks announced the general availability of Serverless Workspaces for Microsoft Azure on March 13, 2026, eliminating the need for customers to manage cloud infrastructure when running data analytics and AI workloads. The new deployment model, available across multiple Azure regions, enables organizations to create Databricks environments in seconds with automatic scaling and per-second billing, though it requires Unity Catalog and lacks customer-managed networking options.

The model moves compute resources out of customer-managed Azure Virtual Networks and into a Databricks-managed multi-tenant environment. According to the Databricks Blog, organizations no longer have to configure VNets, allocate IP ranges, or manage firewall rules and NAT gateways.

The serverless offering supports all major Databricks workloads, including notebooks, workflows, Databricks SQL, Delta Live Tables, and model serving, all powered by the high-performance Photon engine. The service launched in 12 Azure regions: Australia East, Canada Central, Central US, East US, East US 2, France Central, Germany West Central, North Europe, UK South, West Europe, West US, and West US 3.

Pricing Structure and Cost Implications

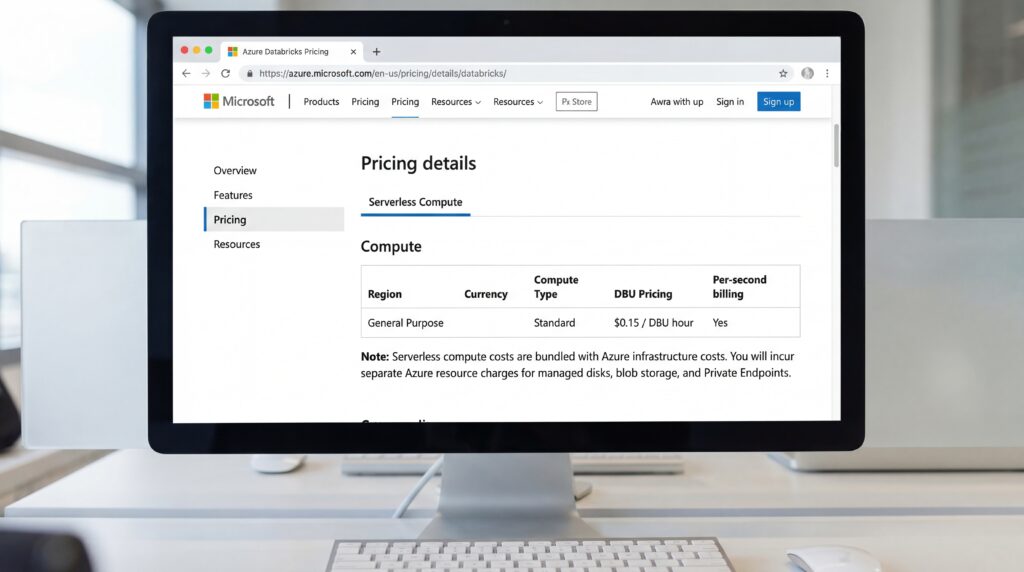

The economic model differs significantly from traditional provisioned clusters. Organizations pay a higher Databricks Unit (DBU) rate that includes underlying Azure infrastructure costs, eliminating separate virtual machine charges, according to Azure Databricks Pricing documentation. Per-second billing means companies pay only for active compute time, potentially offering substantial savings for intermittent workloads.

Microsoft Learn documentation indicates that while compute costs are bundled, customers remain responsible for related Azure resources such as managed disks, blob storage, and networking components like Private Endpoints. For workloads with variable or spiky demand, the serverless model could prove more cost-effective than maintaining idle clusters.
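The trade-off between a higher bundled rate and per-second billing can be illustrated with a rough calculation. The sketch below is only an illustration: every rate in it is a hypothetical placeholder, not an actual Azure Databricks price, and the function names are invented for this example. It compares a workload that is active for two hours against a classic cluster that stays provisioned for an eight-hour window, and it ignores the related Azure resources (storage, Private Endpoints) that are billed separately in either model.

```python
# All rates below are HYPOTHETICAL placeholders, not published prices.

def serverless_cost(active_seconds: float, dbus_per_hour: float,
                    bundled_dbu_rate: float) -> float:
    """Cost when billing is per second of active compute and the
    DBU rate already includes infrastructure."""
    return active_seconds / 3600 * dbus_per_hour * bundled_dbu_rate


def classic_cost(provisioned_seconds: float, dbus_per_hour: float,
                 dbu_rate: float, vm_rate_per_hour: float) -> float:
    """Cost when the cluster is billed for the whole time it is
    provisioned, with DBUs and VMs charged separately."""
    hourly = dbus_per_hour * dbu_rate + vm_rate_per_hour
    return provisioned_seconds / 3600 * hourly


def break_even_utilization(dbus_per_hour: float, bundled_dbu_rate: float,
                           dbu_rate: float, vm_rate_per_hour: float) -> float:
    """Fraction of provisioned time the workload must be active before
    the classic cluster becomes the cheaper option."""
    classic_hourly = dbus_per_hour * dbu_rate + vm_rate_per_hour
    serverless_hourly = dbus_per_hour * bundled_dbu_rate
    return classic_hourly / serverless_hourly


# Assumed scenario: 2 active hours inside an 8-hour provisioned window.
DBUS_PER_HOUR = 4.0        # assumed consumption
BUNDLED_RATE = 0.70        # assumed serverless $/DBU (includes infrastructure)
CLASSIC_DBU_RATE = 0.30    # assumed classic $/DBU
VM_RATE = 1.00             # assumed $/hour for the underlying VMs

serverless = serverless_cost(2 * 3600, DBUS_PER_HOUR, BUNDLED_RATE)
classic = classic_cost(8 * 3600, DBUS_PER_HOUR, CLASSIC_DBU_RATE, VM_RATE)
threshold = break_even_utilization(DBUS_PER_HOUR, BUNDLED_RATE,
                                   CLASSIC_DBU_RATE, VM_RATE)

print(f"serverless: ${serverless:.2f}, classic: ${classic:.2f}")
print(f"classic is cheaper only above {threshold:.0%} utilization")
```

Under these assumed numbers the serverless run costs less because the classic cluster sits idle for six of its eight hours. With a steadier workload that keeps the cluster busy most of the time, the comparison can reverse, which is why the break-even utilization is worth estimating with real rates before migrating.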

Technical Limitations and Migration Challenges

Despite its advantages, the serverless architecture comes with notable constraints. Organizations cannot select specific VM instance types, as Databricks automatically manages resource allocation. The requirement for Unity Catalog means companies must adopt Databricks’ governance framework before accessing serverless features.

Critically, there is no direct upgrade path from Classic to Serverless Workspaces. According to Databricks documentation, organizations must redeploy assets to new serverless environments, though Unity Catalog ensures consistent data access across both models. The lack of customer-managed VNet injection means enterprises requiring specific firewall rules or on-premises connections must maintain Classic Workspaces for those workloads.

The release positions Databricks to compete more effectively in the cloud data platform market, where simplified management and consumption-based pricing have become key differentiators. For data teams struggling with infrastructure overhead, the serverless model promises to accelerate time-to-value while maintaining enterprise-grade security through integration with Microsoft Entra ID (formerly Azure Active Directory) and Unity Catalog’s role-based access controls.

Sources

- databricks.com/blog

- azure.microsoft.com

- learn.microsoft.com